Adneural: TRIBE v2 + MiroFish for Pre-Launch Ad Testing

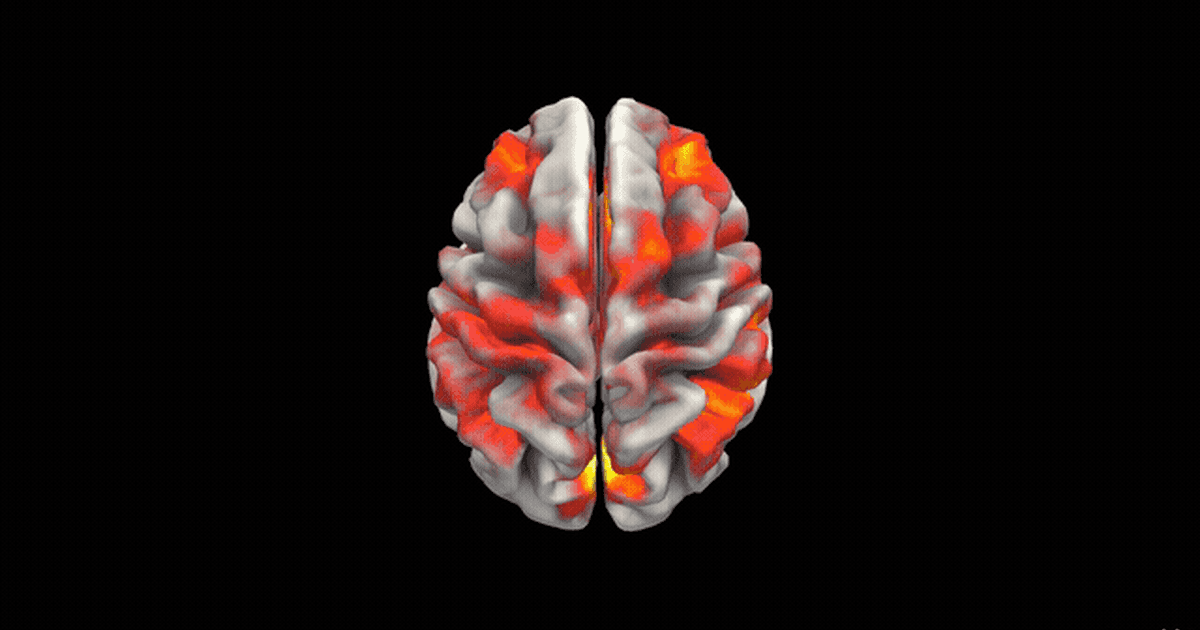

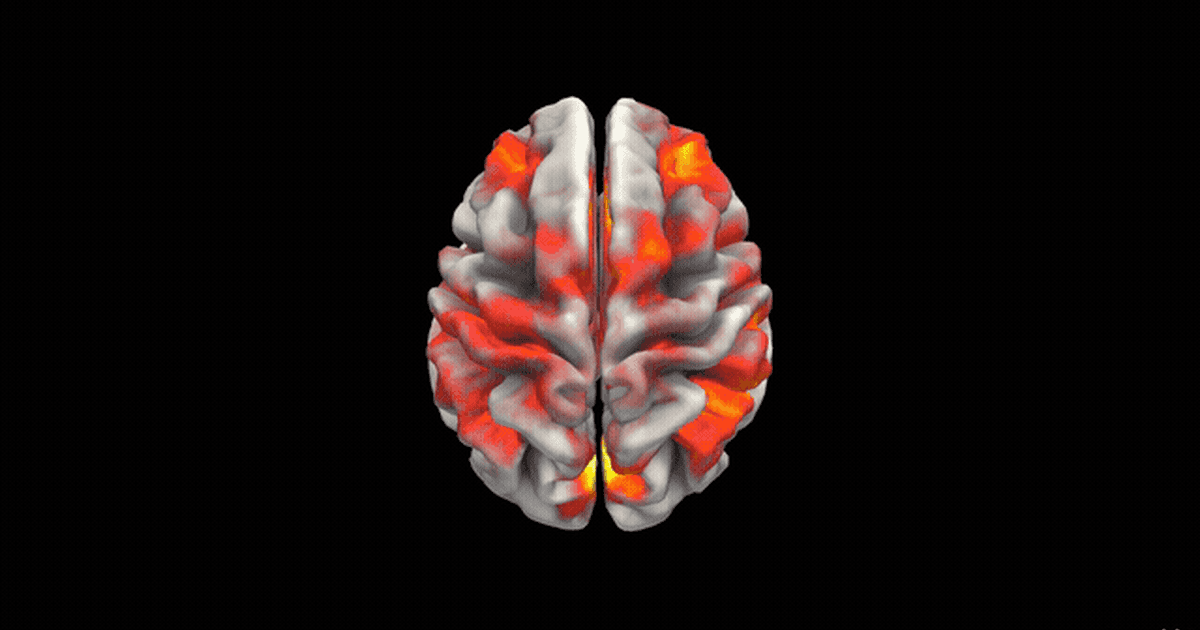

Adneural predicts how an ad will perform — neurologically and socially — before launch, combining Meta's TRIBE v2 fMRI encoder with a 200-agent MiroFish/OASIS simulation.

Retrofitting a handwriting generator with a polar-coordinate tokenizer and a 6-layer cross-attention GPT decoder — character accuracy jumps from 82% to 96% and the baseline-drift problem disappears.

A step-by-step walkthrough of building a realistic LSTM handwriting generator in PyTorch — mixture-density outputs, Gaussian attention over characters, style priming, and honest failure modes.

I was one of 50 ICT technicians chosen from ~15,000 applicants to support COP-29 in Baku. Tracking 1,000+ laptops, pushing daily patches, and what it takes to run conference IT at UN scale.

A small 2-layer LSTM beats a same-sized transformer at forecasting global temperatures from NASA GISTEMP. Here's why small recurrent models still earn a seat at the table.

Feedback after launching OmarAI — why short responses persisted, how I tuned the persona for depth, and a head-to-head of GPT-4o, Llama, MPT, and Falcon as the underlying model.

Shipping a chatbot UI inside my existing Next.js portfolio — why a Subframe template failed, why I split the backend into Python, and how I wired the FastAPI service into the frontend.

The fine-tuned GPT sounded like me but remembered nothing. I compared embeddings, multi-agent file search, and a simple JSON memory in a system prompt — here's why the JSON won.

How I collapsed 20,000 messy Instagram DM pairs into ~2,000 high-quality English conversations by using GPT-3.5 as a data curator — the prompt, the batch size, and the fine-tuning format.

How I turned years of Instagram DMs into a fine-tuning dataset for a personal AI chatbot: Instagram export structure, consecutive-message merging, and why short prompts made the model give stub responses.

Three months at Payriff: fusing bank data with mobile-provider signals to score thin-file applicants, and shipping a RAG chatbot that taught developers the Payriff API.

Building a production Next.js site for a youth-led NGO — sub-100KB JS, single-screen Stripe donation flow that doubled completion, and why content structure was harder than any of the code.

How I taught a computer to assemble jigsaw puzzles from a photo — piece segmentation, tab-vs-blank edge classification, and a greedy graph assembler that handles most puzzles I throw at it.