Adneural: TRIBE v2 + MiroFish for Pre-Launch Ad Testing

Adneural is a pre-launch ad testing platform that predicts how an ad will perform neurologically and socially — before you spend a dollar on media. It uses Meta's TRIBE v2 brain encoding model to predict voxel-level fMRI responses to a creative, then runs a 200-agent MiroFish / OASIS simulation to predict how people will talk about it. The platform ties the two signals together so you know why a simulated audience reacts the way they do — not just that they do.

TL;DR

- Neural half: TRIBE v2 (trained on 450+ hours of fMRI data and evaluated on recordings from 720 subjects) predicts brain response to the ad.

- Social half: 200 personality-diverse agents simulate discourse around the ad in Twitter- and Reddit-like environments.

- Bridge: LangGraph + Neo4j GraphRAG over 22 neuroscience papers ties every social cluster back to a neural cause.

Why pre-launch ad testing is broken

Brand teams test ads in focus groups and with panels after the ad is already made. That's expensive, slow, and late — by the time you realize the ad doesn't land, the budget is already burned on production. The "test before you launch" services on the market today are almost all survey-based. Adneural's bet is that we can give a more useful signal, faster, by combining two things that were previously kept separate: a foundation model of the brain, and a crowd simulator.

The neuromarketing market is projected to grow from $1.71B in 2025 to $2.62B by 2030. Most of that growth comes from platforms attempting to replace eye-tracking labs. Adneural is attempting to replace the lab entirely with a digital twin of a brain plus a digital twin of a crowd.

Half one — the brain side (TRIBE v2)

TRIBE v2 is Meta FAIR's second-generation trimodal brain encoder. It takes an image, video clip, audio recording, or text passage as input, and outputs a predicted fMRI response pattern across the whole brain. It was trained on 451.6 hours of fMRI data from 25 subjects across four naturalistic studies and evaluated across 1,117.7 hours of recordings from 720 subjects.

Internally, TRIBE v2 is a fusion of three pretrained encoders:

| Modality | Encoder |
|---|---|
| Text | LLaMA 3.2 |
| Video | V-JEPA 2 |
| Audio | Wav2Vec-BERT |

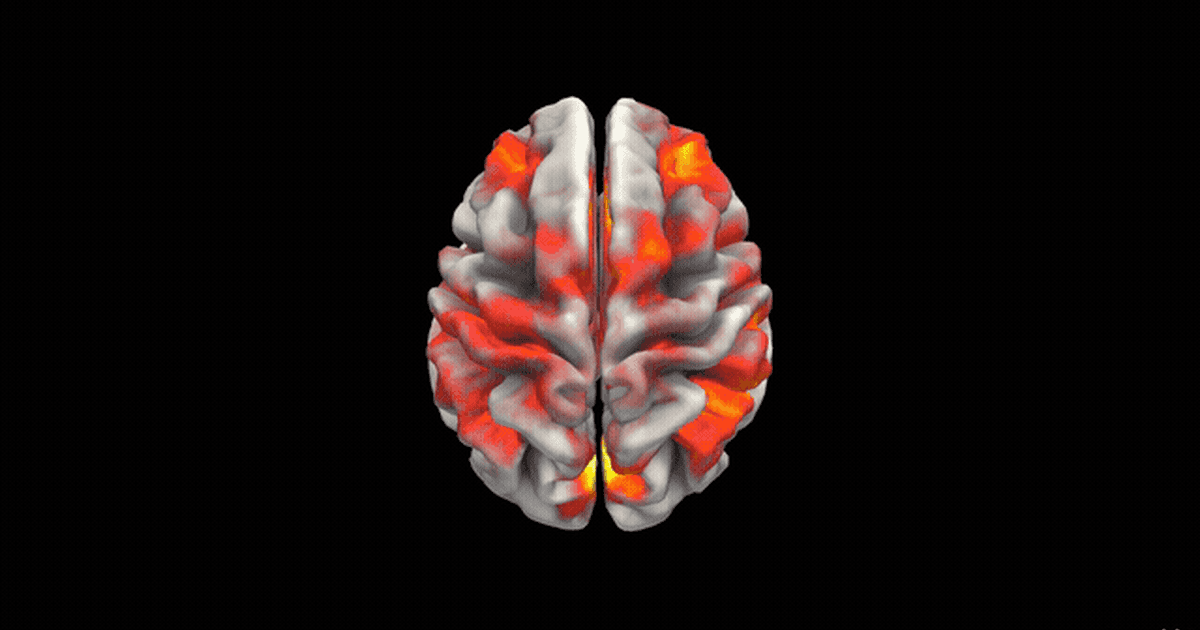

When Adneural feeds an ad in, TRIBE v2 returns predicted activity. Adneural scores that activity on a handful of axes: does this creative engage the attention network, does it engage the reward circuit, does interest decay after 5 seconds, and does it engage the memory-encoding regions associated with long-term recall.
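As a rough illustration of what scoring on axes could look like, here is a minimal sketch. It assumes the predicted activity arrives as a list of time steps with one value per voxel, and that each axis is a set of voxel indices. The names `ROI_MASKS`, `score_axes`, and `late_drop` are hypothetical and are not part of TRIBE v2 or the Adneural codebase.

```python
from statistics import mean

# Hypothetical voxel-index sets for each scoring axis. Real masks would come
# from a brain atlas, not from hand-written indices like these.
ROI_MASKS = {
    "attention": [0, 1],
    "reward": [2, 3],
    "memory_encoding": [4, 5],
}


def score_axes(predicted, roi_masks=ROI_MASKS):
    """Average predicted activity per axis.

    `predicted` is a list of time steps, each a list of per-voxel values.
    Returns {axis: mean over all time steps and all voxels in that mask}.
    """
    return {
        axis: mean(frame[v] for frame in predicted for v in voxels)
        for axis, voxels in roi_masks.items()
    }


def late_drop(predicted, voxels, split):
    """Mean activity before `split` minus mean activity from `split` onward.

    A positive value means the response in those voxels fell off later on.
    """
    def window_mean(frames):
        return mean(frame[v] for frame in frames for v in voxels)

    return window_mean(predicted[:split]) - window_mean(predicted[split:])


# Toy prediction: 4 time steps x 6 voxels, made-up numbers.
toy = [
    [1.0, 1.0, 0.5, 0.5, 0.2, 0.2],
    [1.0, 1.0, 0.5, 0.5, 0.2, 0.2],
    [0.0, 0.0, 0.5, 0.5, 0.2, 0.2],
    [0.0, 0.0, 0.5, 0.5, 0.2, 0.2],
]
scores = score_axes(toy)
attention_drop = late_drop(toy, ROI_MASKS["attention"], split=2)
```

In this toy data the attention voxels go silent halfway through, so `attention_drop` comes out positive. That is the kind of pattern the "does interest decay" question is looking for.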

The Three.js brain visualization is where demos go quiet. Seeing a predicted fMRI heatmap pulse over a 3D brain while the ad plays is the moment people stop asking "why not just run a survey" and start asking "how fast can we get this in front of our team."

Half two — the social side (MiroFish + OASIS)

A neural signal is interesting, but the real question is what will people say about this ad. For that, Adneural uses MiroFish on top of the OASIS social simulation framework from CAMEL-AI. OASIS scales to one million agents and supports 23 different social actions — following, commenting, reposting, and more.

Adneural spins up 200 agents with distinct personality vectors:

- Big-Five trait scores (openness, conscientiousness, extraversion, agreeableness, neuroticism)

- Age bucket and interest embeddings

- Discourse style (terse, verbose, sarcastic, earnest)
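A personality vector along these lines could be sketched as a small dataclass. Everything below is an assumption made for illustration: the field names, the age buckets, the embedding size, and the uniform sampling are mine, not MiroFish's or OASIS's actual agent schema.

```python
import random
from dataclasses import dataclass

BIG_FIVE = ("openness", "conscientiousness", "extraversion",
            "agreeableness", "neuroticism")
DISCOURSE_STYLES = ("terse", "verbose", "sarcastic", "earnest")
AGE_BUCKETS = ("18-24", "25-34", "35-49", "50+")  # hypothetical buckets


@dataclass(frozen=True)
class Persona:
    # Big-Five trait scores, each in [0, 1).
    openness: float
    conscientiousness: float
    extraversion: float
    agreeableness: float
    neuroticism: float
    age_bucket: str
    # Placeholder vector; a real one would come from an embedding model.
    interest_embedding: tuple
    discourse_style: str


def sample_personas(n, embedding_dim=8, seed=0):
    """Draw `n` personas uniformly at random, seeded so runs are repeatable."""
    rng = random.Random(seed)
    personas = []
    for _ in range(n):
        traits = {name: rng.random() for name in BIG_FIVE}
        personas.append(Persona(
            **traits,
            age_bucket=rng.choice(AGE_BUCKETS),
            interest_embedding=tuple(
                rng.uniform(-1.0, 1.0) for _ in range(embedding_dim)
            ),
            discourse_style=rng.choice(DISCOURSE_STYLES),
        ))
    return personas


agents = sample_personas(200)
```

Seeding the generator matters more than it looks: if two creatives are compared against different random crowds, it becomes hard to tell whether the ad or the crowd caused the difference.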

The agents "see" the ad, react, and discuss it with each other in parallel Twitter-like and Reddit-like environments. MiroFish's ReportAgent then steps in to analyze how opinions shifted, which coalitions formed, and what patterns emerged — producing a structured prediction report.

The output is a discourse graph: nodes are reactions, edges are influence, clusters are reaction types.
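To make that shape concrete, here is a minimal in-memory sketch of such a graph. The `Reaction` and `DiscourseGraph` names, their fields, and the sample reactions are hypothetical. The real output format of the simulation may look quite different.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Reaction:
    reaction_id: int
    agent_id: int
    reaction_type: str  # cluster label, e.g. "skeptical" or "enthusiastic"
    text: str


class DiscourseGraph:
    def __init__(self):
        self.reactions = {}                 # reaction_id -> Reaction (nodes)
        self.influences = defaultdict(set)  # source id -> ids it influenced (edges)

    def add_reaction(self, reaction):
        self.reactions[reaction.reaction_id] = reaction

    def add_influence(self, source_id, target_id):
        if source_id not in self.reactions or target_id not in self.reactions:
            raise KeyError("both reactions must be added before linking them")
        self.influences[source_id].add(target_id)

    def clusters(self):
        """Group reaction ids by reaction type."""
        grouped = defaultdict(list)
        for reaction in self.reactions.values():
            grouped[reaction.reaction_type].append(reaction.reaction_id)
        return dict(grouped)

    def most_influential(self):
        """Return the reaction id with the most outgoing influence edges."""
        return max(self.reactions,
                   key=lambda rid: len(self.influences.get(rid, ())))


graph = DiscourseGraph()
graph.add_reaction(Reaction(1, 17, "skeptical", "Looks staged to me."))
graph.add_reaction(Reaction(2, 42, "skeptical", "Agreed, the reveal felt flat."))
graph.add_reaction(Reaction(3, 99, "enthusiastic", "I would buy this."))
graph.add_influence(1, 2)
graph.add_influence(1, 3)
```

Here `graph.clusters()` groups reactions 1 and 2 under "skeptical" and reaction 3 under "enthusiastic", and `graph.most_influential()` returns reaction 1, the one with two outgoing edges.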

Tying the two halves together

A pure brain signal can tell you "this ad is arousing in the amygdala." A pure social simulation can tell you "this ad generates a polarized response in the fitness community." Neither is enough on its own. The interesting product is the bridge — tracing a social reaction back to a neural signature and vice-versa.

Adneural's LangGraph diagnostic pipeline walks through the simulation output structurally instead of blobbing it into one big LLM prompt. Each cluster of reactions is matched against the neural heatmap. The final report reads like: "Cluster 3 (skeptical fitness enthusiasts) tracks with a predicted under-response in the reward circuit at second 4 of the ad — specifically the product-reveal frame." That's the signal advertisers pay for.
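One simple way to picture the matching step is sketched below. It assumes each cluster's reactions can be tagged with the ad second they refer to, and that each neural axis has a per-second score plus a baseline. The `match_cluster` function, the baselines, and all the numbers are made up for illustration. The actual pipeline may match clusters to the heatmap in a different way.

```python
from collections import Counter


def match_cluster(referenced_seconds, axis_timecourses, baselines):
    """Link one reaction cluster to the weakest neural axis at its focal second.

    referenced_seconds: ad timestamps (whole seconds) that the cluster's
        reactions refer to.
    axis_timecourses: {axis: [predicted score at second 0, 1, 2, ...]}.
    baselines: {axis: reference score to compare against}.
    """
    # The second this cluster talks about most often.
    focal_second = Counter(referenced_seconds).most_common(1)[0][0]
    # Deviation from baseline at that second; most negative = under-response.
    deviations = {
        axis: series[focal_second] - baselines[axis]
        for axis, series in axis_timecourses.items()
    }
    axis = min(deviations, key=deviations.get)
    return {"second": focal_second, "axis": axis, "deviation": deviations[axis]}


# Made-up per-second scores for a 6-second ad.
timecourses = {
    "reward": [0.6, 0.6, 0.5, 0.5, 0.1, 0.4],
    "attention": [0.7, 0.7, 0.7, 0.6, 0.6, 0.6],
}
baselines = {"reward": 0.5, "attention": 0.5}

# Seconds referenced by one cluster's reactions; second 4 dominates.
finding = match_cluster([4, 4, 3, 4, 5], timecourses, baselines)
```

With these toy numbers, `finding` points at the reward axis at second 4, which mirrors the shape of the example report sentence above.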

Under the hood — things I'm proud of

- Neo4j GraphRAG over 22 foundational neuroscience papers grounds the LLM's explanations in real literature. If you ask "why does this ad hit the hippocampus," the answer cites a specific paper, not a hallucination. This is a textbook GraphRAG application: the graph captures which brain region is described in which paper, and in what context.

- LangGraph structured diagnostic analysis means the simulation-to-report step is a DAG, not a single prompt. Each node is inspectable, testable, and swappable.

- Three.js whole-brain visualization took longer to get right than anything else and it's the part everyone remembers from a demo.
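The "DAG, not a single prompt" idea can be shown without any framework. The sketch below uses Python's standard-library `graphlib` rather than LangGraph's own API, and every step name and stub payload in it is hypothetical. The point is only that each step is a plain function that can be inspected, tested, or swapped on its own.

```python
from graphlib import TopologicalSorter


def load_clusters(state):
    # Stub: would read clusters from the simulation output.
    return {"clusters": ["skeptical", "enthusiastic"]}


def load_neural(state):
    # Stub: would read per-axis deviations from the brain-model output.
    return {"neural": {"reward": -0.4, "attention": 0.1}}


def match_clusters(state):
    # Pair every cluster with the axis showing the lowest deviation.
    weakest = min(state["neural"], key=state["neural"].get)
    return {"matches": [(c, weakest) for c in state["clusters"]]}


def write_report(state):
    return {"report": [
        f"{cluster} tracks with an under-response in {axis}"
        for cluster, axis in state["matches"]
    ]}


# step name -> (function, names of the steps it depends on)
PIPELINE = {
    "load_clusters": (load_clusters, []),
    "load_neural": (load_neural, []),
    "match_clusters": (match_clusters, ["load_clusters", "load_neural"]),
    "write_report": (write_report, ["match_clusters"]),
}


def run_pipeline(pipeline):
    """Run steps in dependency order and merge their outputs into one state."""
    dependencies = {name: deps for name, (_, deps) in pipeline.items()}
    state, trace = {}, []
    for name in TopologicalSorter(dependencies).static_order():
        step, _ = pipeline[name]
        state.update(step(state))
        trace.append(name)  # record execution order for inspection
    return state, trace


state, trace = run_pipeline(PIPELINE)
```

Swapping a step means replacing one entry in `PIPELINE`, and `trace` shows the order the steps actually ran in. That is the inspectability a single large prompt cannot give you.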

Stack

| Layer | Tech |
|---|---|
| Frontend | Next.js 15, React 19, Three.js |
| Backend | FastAPI, OpenRouter |
| Graph | Neo4j (GraphRAG) |
| Orchestration | LangGraph |
| Brain model | Meta TRIBE v2 (GPU service, internal RPC) |
| Agent simulation | MiroFish + OASIS (GPU service, internal RPC) |

Key takeaways

- Combining a neural encoder with a social simulation is the product, not either half alone.

- Grounding LLM output in a Neo4j knowledge graph is the difference between a demo and a platform you'd pay for.

- Early demos should visualize, not tabulate — the Three.js brain animation is what closes the room.

What's next

If you're in ad-tech, applied neuroscience, or just like the idea of simulating a crowd of 200 opinionated agents arguing about your TV spot, come talk to me. The repo is at github.com/OmarMusayev/Adneural.

References

- Meta AI — Introducing TRIBE v2

- Meta FAIR — TRIBE v2 on GitHub

- arXiv — TRIBE: TRImodal Brain Encoder for whole-brain fMRI response prediction

- arXiv — OASIS: Open Agent Social Interaction Simulations with One Million Agents

- MiroFish — GitHub repository

- Neo4j — What is GraphRAG?

Frequently Asked Questions

What is Meta TRIBE v2 and why does it matter for marketing?

TRIBE v2 is a foundation model from Meta's FAIR team that predicts voxel-level fMRI brain responses to video, audio, and text. For marketing, it lets you measure predicted attention, reward, and memory-encoding signals for an ad before you spend a dollar on media, complementing slow, expensive focus groups with a fast neural signal.

How does Adneural use MiroFish and OASIS?

MiroFish is a multi-agent prediction engine that builds a parallel digital world around a stimulus. OASIS provides the scalable social substrate. Adneural spins up 200 personality-diverse agents on top of that substrate, shows them the ad, and records who shares, who pushes back, and which clusters of reaction emerge.

Why combine neural encoding with a social simulation?

A brain signal alone tells you an ad is arousing in a specific brain region. A social simulation alone tells you an ad polarizes people. Combining both lets you trace a social reaction back to a neural cause, which is the signal advertisers actually care about.

How accurate is Adneural compared to traditional ad testing?

Adneural is a research platform, not a replacement for human panels. The value is speed and coverage — testing hundreds of creative variants in hours instead of running post-launch diagnostics on the single version that shipped. Early results show directional agreement with pre/post campaign KPIs on the pilot creatives.

What is the Adneural tech stack?

Next.js 15 with React 19 on the frontend, FastAPI on the backend, OpenRouter for model routing, Neo4j for the GraphRAG layer, LangGraph for structured diagnostic analysis, Three.js for the 3D brain visualization, and internal RPC to GPU-bound services running TRIBE v2 and the MiroFish agent pool.

Is Adneural open source?

The core repo is on GitHub at github.com/OmarMusayev/Adneural. It's early — pieces work, pieces embarrassingly don't — and feedback from anyone in ad-tech, applied neuroscience, or multi-agent systems is very welcome.